
Social cognition begins with social perception, and social perception has the same purpose as nonsocial perception:

Accordingly, the social perceiver has four fundamental tasks:

A Brief History of Causality

Philosophical analyses of judgments of causality go back at least as far as Aristotle. As a first pass, we should distinguish between two kinds of relations between cause and effect:

Aristotle taught that knowledge of a thing requires description, classification, and causal explanation. He distinguished among four types of causality:

Hume provided the classic analysis of the conditions for inferring causality:

Mill's methods for inferring causality:

Koch's Postulates for establishing the cause of an infectious disease:

Causal inference in epidemiology (Bradford Hill, 1965). Example: smoking and health.

Stephen Kern traces the history of our understanding of the causes of human behavior through an analysis of novelistic depictions of murder in A Cultural History of Causality (Princeton University Press, 2004).

In a sense, all of science is about establishing causality, and that goes for psychology as well as physics and biology. What causes us to perceive and remember things the way we do? What causes individual differences in personality? But as Heider (1944, 1958) noted, in social cognition the problem of causal attribution is one of phenomenal causality -- not so much what actually causes events to occur (which is a problem for behavioral and social science), but rather the "naive" social perceiver's intuitive, subjective beliefs about causal relations.

The empirical study of causal inferences begins with work by Michotte (1946, 1950), who asked subjects to view short animated films depicting interactions among colored disks.

In Demonstration 1, the red disk moves into contact with the blue disk, which moves immediately.

In this case, subjects typically believed that the movement of the red disk triggered the movement of the blue disk -- what Michotte termed the launching effect. When the red disk contacted the blue disk, as in Demonstration 2, but the blue disk moved only after some delay, the launching effect diminished.

Subjects also perceive causal relations when the two disks move together, even when they do not touch.

Michotte termed this the entrainment effect. In Demonstration 3, the larger red disk tends to be perceived as chasing the smaller blue disk, and the blue disk as running away. In Demonstration 4, the larger red disk tends to be perceived as leading the smaller blue disk, and the blue disk as following. Note the ascription of human motives (chasing and running away, leading and following) to these inanimate objects.

While Hume argued that causality was inferred from such stimulus features as spatiotemporal contiguity, Michotte argued that causality was perceived directly, without need for inferences (much as Gibson proposed that such properties as distance and motion were perceived directly, without the involvement of any "higher" cognitive processes). But in the present context, Michotte's most interesting observations concerned the attribution of mental states to inanimate objects. When ordinary people describe causality, they very often do so in anthropomorphic terms, ascribing human motives to nonhuman objects. Phenomenal causality is very often experienced in social terms.

Still, Michotte was not a social psychologist, and he was not particularly interested in social cognition. Within social psychology, the study of phenomenal causality, and of causal attribution, really begins with Fritz Heider (1944, 1958). Like Lewin and Asch, Heider (1896-1988) was a refugee from Hitler's Europe; and like Lewin and Asch, Heider was greatly influenced by the Gestalt school of psychology.

Echoing Lewin's formula, B = f(P, E), Heider argued that there were two possible causes of behavior:

In this way, as we will see, Heider set the stage for a major line of theorizing about causal attribution.

Unlike Lewin, however, he was not concerned with the actual causes of behavior, but rather with the perceived causes. Determining the actual causes of behavior is the job of a professional psychologist, and (from Heider's point of view) social cognition is concerned with how the naive, intuitive, "lay" psychologist judges causes. So how does a person go about making attributions of causality?

Heider's own solution was the statement that "Behavior engulfs the field". Because we have no direct access to another's mental states, information about persons is provided by their behavior. Thus, the perceptual field consists of the actor and his or her behavior.

Heider further noted a general tendency for people to ascribe behavior to the actor. On the assumption that actors intend the outcomes of their actions, once an action has been categorized, the intention is given; and then the intention itself is derived from stable traits and other characteristics of the person.

This formulation is an extension of the Gestalt principle that "the whole is greater than the sum of its parts". For Heider, the actor and the act are perceived as a unit, joined together by perceived cause and effect, in such a way that the actor is perceived as the cause of his or her action.

Note, too, that for Heider explanation stops at the psychological level of analysis. For Heider, Sue struck John because she was angry. There is no reduction to underlying physiological processes, which would involve an infinite regress of causal explanations. Heider is concerned with phenomenal causality, and with explanations in terms of "folk" or "common-sense" psychology.

Like Michotte, Heider employed a film to explore phenomenal causality. In the film, two triangles -- one large (T) and one small (t) -- and a small circle (c) move in and out of a rectangular enclosure. But Heider noticed that his subjects typically described the film in terms of a "plot" that attributed feelings and desires to the objects. In other words, subjects organized the chaos of the film by attributing intentionality to the objects -- giving the film a narrative structure in which the objects behaved in accordance with motives and desires.



Here is Heider's own description of the film (Heider & Simmel, 1944, p. 245):

Or:

The Michotte and Heider-Simmel animations undoubtedly set the stage for The Red Balloon (1956), a French short film directed by Albert Lamorisse. The film portrays the adventures of a little boy (played by the director's son, Pascal) who finds a balloon whose movements have all the hallmarks of sentience and agency. The film won the Palme d'Or for short films at the Cannes Film Festival, and an Oscar for best original screenplay.


Naive Analysis of Action

For Heider (1958), the key to interpersonal relations is the perception of other people's behavior. We know what the other person (P) does; how we respond will depend on why we think s/he did it. Following "Lewin's grand truism", B = f(P, E), Heider believed that people attributed individual behavioral acts to relatively unchanging dispositional properties. But unlike some later interpretations, "disposition" did not, for Heider, refer only to personal dispositions, such as personality traits; there were also environmental dispositions -- features of the environment that tended to elicit certain behaviors.

The motivational factor, in turn, creates a distinction between personal and impersonal causality.

Even in cases of personal causality, though, Heider thought that naive observers distinguished among various levels of responsibility.

Although this is all a theoretical analysis, a study by Shaw & Sulzer (1964) largely verified these predictions.

For more details of Heider's analysis of the naive analysis of action, see Shaw and Costanzo, Theories of Social Psychology (1e, 1970), from which this account is taken.

The first formal theory of causal attribution was proposed by Jones and Davis (1965), as an elaboration on Heider's principles. Their correspondent inference theory is based on what they called the action-attribute paradigm:

In this way, we make correspondent inferences -- that people's actions correspond to their intentions, and that their intentions correspond to their personal qualities, namely traits and attitudes. In correspondent inferences, an act and an attribute are similarly described -- e.g., a dominant person behaves in a dominant manner. This is known as the attribute-effect linkage:

Given an attribute-effect linkage which is offered to explain why an act occurred, correspondence increases as the judged value of the attribute departs from the judge's conception of the average person's standing on that attribute.

Thus, correspondent inferences are predicated on implicit personality theory -- the observer's intuitions about trait-behavior relations, and about how the "average person" would behave.

Jones and Davis understood that acts are not performed in isolation, and that usually actors have a choice between plausible alternatives. In making causal attributions, people pay attention to:

Noncommon effects are assumed to reflect the actor's intended outcomes, and so correspond to his dispositions.
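The bookkeeping behind noncommon effects can be made concrete with a minimal Python sketch. It assumes, purely for illustration, that each option open to the actor can be listed as a set of effects; the college-choice example and the function name `noncommon_effects` are hypothetical, not Jones and Davis's own materials. Effects of the chosen option that no rejected option shares are the candidates for what the actor intended.

```python
def noncommon_effects(chosen, rejected):
    """Effects of the chosen alternative that none of the rejected alternatives share.

    chosen   -- set of effects produced by the option the actor picked
    rejected -- list of sets, one per option the actor passed up
    """
    shared = set()
    for alternative in rejected:
        # Collect whatever the chosen option has in common with this forgone one.
        shared |= chosen & alternative
    return chosen - shared


# Hypothetical example: a student chooses College A over College B.
college_a = {"prestigious", "near home", "strong drama program"}
college_b = {"prestigious", "near home"}

print(noncommon_effects(college_a, [college_b]))  # {'strong drama program'}
```

Here the only noncommon effect is the drama program, so an observer would infer that this is what the student was after. Jones and Davis held that the fewer the noncommon effects, the more confident the inference: if the chosen college differed from the rejected one in many ways, the observer could not tell which difference mattered to the student.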

Jones and Davis argued that correspondence is greater for undesirable effects, which can't be predicted from knowledge of social norms, and which provide information about the dispositions of the individual.

Later, Jones & McGillis (1976) substituted expectancies for desirability. That is, correspondence is greater for unexpected effects; expected effects can be predicted from social norms.

"Since no one is to blame, I demand no explanation"

The first empirical test of correspondent inference theory was reported by Jones and Harris (1967). Their study employed an attitude-attribution paradigm in which subjects were asked to judge the attitude of another person based on the target's expressed opinion and the context in which the expression occurred. Debaters gave speeches that either favored or opposed recognition of the Castro regime in Cuba, or favored or opposed racial segregation (note that in both cases, at the time, the "con" side was normative). The judges were further informed that the speeches were made under two conditions: choice (the debater could choose which side he would present) or no choice (the debater was assigned a position by his coach).

In the Castro debate, pro-Castro speakers were rated as actually favoring Castro. This was especially true in the choice condition, but it was also true in the no-choice condition. Here is a good example of the role of desirability and expectation: in the United States in 1967, even among college students, favoring Castro was both undesirable and unexpected. So, speakers who favored Castro were rated as actually having pro-Castro attitudes.


The results for the segregation debate were a little more complex, and depended on whether the debater was characterized as from the North or the South. Pro-segregation speakers were generally rated as favoring segregation -- especially if they had a choice, or came from the North. Again, though, we see the role of desirability and expectation. Given the stereotypes about the American North and South that prevailed in 1967, we would expect Northerners to oppose racial segregation; Southerners might support racial segregation, or they might simply say they do, in order to conform with local (Southern) norms. But a Northerner who advocates racial segregation is doing something that is both undesirable and unexpected, from a "Northern" point of view. Therefore, the inference is that Northerners who spoke favorably about racial segregation really did favor the practice.


The general finding of this experiment, then, was that attitudes were attributed in line with the speaker's behavior, even when the speaker had been assigned a position and had no choice.

Later, Lee Ross (1977) would argue that this general ignorance of situational constraints reflected the Fundamental Attribution Error: the general tendency to underestimate the role of situational factors in behavior, and to overestimate the role of personal dispositions. But we're not there yet.

A further analysis of causal attribution was provided by Harold Kelley (1967, 1971), who also expanded on Heider's insights. Kelley noted that we do not always simply attribute an action to the actor's dispositions. Sometimes we make more complex and subtle causal judgments. Kelley proposed a covariation model of causal attribution based on the statistical analysis of variance (ANOVA). He proposed that we infer causality from multiple observations of behavior, just as a formal experiment entails multiple trials.

Based on Lewin -- everything in social psychology goes back to Lewin! -- he argued that there are three principal causes of behavior:

Following the ANOVA model, Kelley also argued that it was possible to have joint causes -- for example, an interaction between the actor and the target.

From multiple observations of behavior, Kelley proposed that we extract three kinds of information relevant to causal attribution:

In making causal attributions, Kelley argued that the judge performs a "naive experiment" in which each type of data varies one principal cause while keeping the others constant. By observing all 8 possible combinations (2x2x2), the judge can determine which element covaries with the behavior, and then infer that this element is the cause of the behavior.

Consider, for example, the following observation: John laughed at the comedian. Depending on the particular pattern of consensus, consistency, and distinctiveness information available, the perceiver would be expected to make different attributions for John's behavior. (Slide: President Obama laughing at a White House Correspondents' Dinner).
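The covariation calculus for this example can be written as a small decision rule. The following is a minimal Python sketch, assuming each kind of information is simply high (True) or low (False); the function name `kelley_attribution` and the label "mixed" are hypothetical conveniences. It encodes the common textbook summary: low consensus, high consistency, and low distinctiveness point to the person (John); high consensus, high consistency, and high distinctiveness point to the stimulus (the comedian); and low consistency points to the circumstances.

```python
from itertools import product


def kelley_attribution(consensus: bool, consistency: bool, distinctiveness: bool) -> str:
    """Map high (True) / low (False) information onto a causal locus."""
    if not consistency:
        # The behavior is not repeated on other occasions: credit the occasion.
        return "circumstances"
    if not consensus and not distinctiveness:
        # Few others laugh at this comedian, but John laughs at every comedian.
        return "person"
    if consensus and distinctiveness:
        # Everyone laughs at this comedian, and John laughs at few others.
        return "stimulus"
    # The two remaining consistent patterns do not isolate a single cause.
    return "mixed"


# All 2 x 2 x 2 = 8 patterns for "John laughed at the comedian."
for consensus, consistency, distinctiveness in product([True, False], repeat=3):
    label = kelley_attribution(consensus, consistency, distinctiveness)
    print(f"consensus={consensus}, consistency={consistency}, "
          f"distinctiveness={distinctiveness} -> {label}")
```

Treating every low-consistency pattern as "circumstances" is a simplification, and the two high-consistency patterns labeled "mixed" are exactly the cases in which a perceiver has to reach beyond the simple calculus.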


The result is what we may call (following Brown, 1986) a covariation calculus for causal attribution -- a framework for analyzing data about causation.


In his original paper, Kelley did not actually provide a test of his "calculus". But intuitively it feels right, and everyday observation provides anecdotal evidence that people do seem to use something like it.

In the movie 10 (1979; directed by Blake Edwards), Dudley Moore plays George Webber, a Hollywood songwriter who should be happy in his relationship (Julie Andrews plays his girlfriend), but who undergoes a midlife crisis stimulated by a chance encounter with a beautiful, younger woman (Bo Derek) who is on her way to her wedding. On impulse, he follows her to Mexico on her honeymoon. One evening, in the hotel bar, he runs into Mary Lewis (played by Dee Wallace), who recognizes him from one of Truman Capote's parties. They retire to his room, but when George cannot consummate their affair, the following conversation ensues as they lie in bed:


| Mary | George |
|---|---|
| George? | Huh? |
| Is it me? | No. |
| Yes it is. | OK. |
| Is it? | No! |
| It is me, isn't it? | No! |
| Has it ever happened to you before? | [Shakes his head in silence.] |
| Well, it's happened to me before! [She gets up, gathers her clothes, and leaves.] | |

Mary has determined that the problem happens to her but not to George: it covaries with her, holding George constant. Therefore, it is her -- at least, so it seems to her, which is all that matters in the present context.

Here's another example (this one from Roger Brown, 1986). On December 3, 1979, the rock group The Who began its American tour with a concert in Cincinnati, Ohio. The concert sold out -- half of the seats reserved in advance, half sold for general admission. When only a few doors opened, there was a stampede among the general-admission ticket-holders, resulting in 11 dead. The next concert, in Providence, Rhode Island, was canceled; and when the open date was offered to Portland, Maine, the town refused. On both occasions, politicians and newspaper editorials blamed The Who and its fans for the deaths in Cincinnati, arguing that the sorts of people who liked The Who were the sorts of people who would kill each other to get a good seat.


The other cities on the tour schedule went ahead with their concerts, and there were no other incidents. At the end of the tour, the general conclusion was that the tragedy was due to the specific situation in Cincinnati: sold-out general admission seats, coupled with the fact that only a few doors were open to allow people into the hall.

Here, again, the determination was that the effect co-varied with the circumstances, holding The Who (and its fans) constant.

More relevant, however, is actual experimental evidence. A classic study by Leslie McArthur (1972) -- McArthur is now Leslie Zebrowitz, the prominent authority on face perception -- constituted the first experimental test of the covariation calculus. McArthur presented subjects with event scenarios consisting of the description of an event, accompanied by consistency, distinctiveness, and consensus information -- to wit:

After reading each scenario, the subjects were asked to choose among five alternative causes:

The results confirmed the essential predictions of the covariation calculus.

Here are the basic elements of the covariation calculus -- or, as Roger Brown called it, a "pocket calculus" for making causal attributions.

The covariation calculus is not all that is involved in causal attribution. McArthur's and later research determined that there are other principles operating as well:

Jones and McGillis (1976) noted that both the covariation calculus and correspondent inference theory assume a "naive scientist" -- that perceivers intuitively perform something like an experimental analysis to isolate distinctive causes. In correspondent inference, the "experiment" focuses on noncommon effects, while the covariation calculus focuses on the locus of variance.

In some respects, correspondent inference generates a hypothesis which is then tested by the covariation calculus. If the correspondent inference is correct, then the pattern of consensus, consistency, and distinctiveness information should lead to an attribution to the actor.

But the covariation calculus stops at the level of "something about the actor". It doesn't say what that "something" is. If that is what the pattern of information shows, then correspondent inference goes further, attributing the behavior to some specific trait of the actor.

In the discussion so far, consensus, consistency, and distinctiveness were characterized as dichotomous variables -- high vs. low. But obviously each of these represents a continuously graded dimension.
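Treated as graded dimensions, the three kinds of information can be estimated as proportions from the judge's "naive experiment". The sketch below is a minimal Python illustration under stated assumptions: the `Observation` record, the function names, and the toy log are all hypothetical, and computing each dimension as a simple proportion is our choice, not a procedure Kelley specified.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    person: str
    stimulus: str
    occasion: int
    responded: bool  # did the behavior (e.g., laughing) occur?


def _rate(observations):
    """Proportion of observations in which the behavior occurred, or None if there are none."""
    obs = list(observations)
    if not obs:
        return None
    return sum(o.responded for o in obs) / len(obs)


def covariation_profile(log, person, stimulus):
    """Graded consensus, consistency, and distinctiveness for one person and stimulus."""
    # Consensus: how often OTHER people respond this way to the same stimulus.
    consensus = _rate(o for o in log if o.stimulus == stimulus and o.person != person)
    # Consistency: how often this person responds to this stimulus across occasions.
    consistency = _rate(o for o in log if o.stimulus == stimulus and o.person == person)
    # Distinctiveness is high when the person does NOT respond this way to other stimuli.
    elsewhere = _rate(o for o in log if o.person == person and o.stimulus != stimulus)
    distinctiveness = None if elsewhere is None else 1 - elsewhere
    return {"consensus": consensus, "consistency": consistency,
            "distinctiveness": distinctiveness}


# Hypothetical observations of John and others watching comedians.
log = [
    Observation("John", "comedian", 1, True),
    Observation("John", "comedian", 2, True),
    Observation("John", "comedian", 3, True),
    Observation("John", "comedian", 4, False),
    Observation("Mary", "comedian", 1, True),
    Observation("Mary", "comedian", 2, False),
    Observation("Sue", "comedian", 1, False),
    Observation("Sue", "comedian", 2, False),
    Observation("John", "other comedian", 1, False),
    Observation("John", "other comedian", 2, False),
]

print(covariation_profile(log, "John", "comedian"))
# {'consensus': 0.25, 'consistency': 0.75, 'distinctiveness': 1.0}
```

Splitting these proportions at some cutoff (0.5, say, which is an arbitrary choice) would recover the high/low version of the three variables.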

Additional dimensions were added by Bernard Weiner (1971), a colleague of Kelley's at UCLA, who was interested in causal attributions for success and failure as part of his cognitive theory of achievement motivation.

In Weiner's view, people's desire to work hard at challenging tasks was determined by their beliefs about why they succeeded, or failed, at those tasks. According to Weiner, people primarily attribute success or failure to one of four factors: ability, effort, task difficulty, and luck.

| | Internal | External |
|---|---|---|
| Stable | Ability | Task difficulty |
| Variable | Effort | Luck |

Later, Lyn Abramson, Lauren Alloy, and their colleagues added a third dimension: globality.

In their clinical research, Abramson, Alloy, and their colleagues noticed that depressed individuals often made internal, stable, and global attributions concerning their own negative outcomes -- that is, they tended to blame themselves for the bad things that happened to them, and these attributions extended to many different aspects of their lives. Now it's one thing to take responsibility for the occasional bad event. It's something else to take responsibility for every bad thing that happens to you. If you do that, you're bound to get depressed!

In their cognitive theory of depression, Abramson and Alloy proposed that a characteristic way of making causal attributions (attributing bad events to stable, internal, global factors) was a cognitive style that rendered people vulnerable to depression in the face of a negative experience. And they developed the Attributional Style Questionnaire (ASQ) to measure this cognitive tendency. The ASQ was later revised by Christopher Peterson, and it's this form -- one for adults, another for children -- that's in use today.

The ASQ poses a number of situations, such as:

You meet a friend who compliments you on your appearance.

Similar questions were asked concerning other scenarios, such as:

Based on these responses, we can compute scores representing the person's tendency to make internal (Question #1), stable (#2), and global (#3) attributions -- especially in situations that are important to that individual (#4). Basically, this is done by summing the scores across items, giving special weight to "important" items.
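The scoring logic just described can be sketched in a few lines of Python. This is a minimal illustration, not the published ASQ scoring key: it assumes 1 to 7 ratings on each question, uses the importance rating (#4) as a weight for the internal, stable, and global ratings (#1 to #3), and averages the three into a composite. The weighting scheme, the function name `asq_scores`, and the example responses are hypothetical.

```python
def asq_scores(items):
    """Importance-weighted attributional-style scores (hypothetical weighting scheme).

    Each item is a dict of 1-7 ratings for 'internal', 'stable', 'global', and
    'important'. The importance rating serves as the item's weight.
    """
    total_weight = sum(item["important"] for item in items)
    scores = {}
    for dim in ("internal", "stable", "global"):
        weighted_sum = sum(item[dim] * item["important"] for item in items)
        scores[dim] = weighted_sum / total_weight
    # Composite: the plain mean of the three weighted dimension scores.
    scores["composite"] = (scores["internal"] + scores["stable"] + scores["global"]) / 3
    return scores


# Hypothetical responses to two bad events: one important, one trivial.
items = [
    {"internal": 6, "stable": 5, "global": 6, "important": 7},
    {"internal": 2, "stable": 3, "global": 2, "important": 1},
]
print(asq_scores(items))
# {'internal': 5.5, 'stable': 4.75, 'global': 5.5, 'composite': 5.25}
```

Unweighted, the internality score for these two events would be 4.0; weighting by importance pulls it toward the event the respondent cares about.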

Abramson and Alloy posited that a tendency to make internal, stable, and global attributions, especially for important negative events, was a risk factor for depression. Not a cause of depression, but a risk factor. In technical jargon, depressogenic attributional style is a diathesis that interacts with environmental stress to produce an episode of illness. That is, if something important and negative happens to such a person, he or she is likely to take responsibility for it and get depressed.

But David Cole and his colleagues at

Kelley (1972) noted that the covariation calculus cannot be applied unless there are multiple observations of behavior. That's because the covariation calculus depends on observations of covariation, and there's no variation in a single observation.

In the case of single observations, the judge can make correspondent inferences. Or, he can rely on causal schemata -- abstract ideas about causality in various domains. Based on the presence or strength of an effect, a judge can use causal schemata to make inferences about the cause of the effect.

Consider, for example, the domain of college admissions. There are lots of different reasons why an applicant might be admitted to college: good grades, high test scores, playing oboe, or being a champion basketball player, to name a few. None of these causes is necessary, but any one of them might be sufficient. Thus, the applicable causal schema is multiple sufficient causes. If a talented oboe player gets into Harvard despite having relatively low SATs, then we attribute this outcome to the fact that the Harvard student orchestra needs an oboe player. If he has good SATs, but got into Harvard when someone else with comparable scores did not, then we can discount his SAT scores and augment his oboe-playing.

Suppose that you hold the theory that achievement requires both high ability and serious effort. As the old joke goes, there's only one way to Carnegie Hall: you've got to have talent and you've got to practice. Thus, the applicable schema is multiple necessary causes. If a student graduates with honors, then -- if you hold this theory -- you can infer that he's both smart and studied hard. But if he studied hard and still didn't do well, then you can infer that he's just not very smart.
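Both schemata can be treated as Boolean rules, and the inferences in the admissions and honors examples then amount to asking which combinations of causes are consistent with what was observed. The following minimal Python sketch assumes causes are simply present or absent; the function and variable names are hypothetical.

```python
from itertools import product


def consistent_worlds(schema, names, outcome, known):
    """Return every assignment of causes that fits the observation.

    schema  -- function from a dict of cause values to the predicted outcome
    names   -- the causes the schema refers to
    outcome -- the outcome the observer actually saw
    known   -- causes whose values the observer already knows
    """
    worlds = []
    for values in product([True, False], repeat=len(names)):
        world = dict(zip(names, values))
        if all(world[k] == v for k, v in known.items()) and schema(world) == outcome:
            worlds.append(world)
    return worlds


def sufficient(world):
    # Multiple sufficient causes: strong SATs OR oboe skill can get you admitted.
    return world["sat"] or world["oboe"]


def necessary(world):
    # Multiple necessary causes: honors require ability AND effort.
    return world["ability"] and world["effort"]


# Admitted despite low SATs: the oboe must be responsible.
print(consistent_worlds(sufficient, ["sat", "oboe"], True, {"sat": False}))
# Graduated with honors: both ability and effort must be present.
print(consistent_worlds(necessary, ["ability", "effort"], True, {}))
# Studied hard but did not earn honors: ability must be lacking.
print(consistent_worlds(necessary, ["ability", "effort"], False, {"effort": True}))
```

Calling `consistent_worlds(sufficient, ["sat", "oboe"], True, {"sat": True})` returns two worlds, one with and one without oboe skill: once one sufficient cause is present, the outcome says little about the other, which is the logic of discounting.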

But suppose you hold a variant on this theory, which holds that, when it comes to academic achievement, high levels of one factor can make up for low levels of another factor. In this case, the applicable schema is compensatory causes. If a student passes a course despite not working very hard, then we infer that he must be really smart. If a student passes despite low aptitude test scores, then we infer that he must have worked really hard.
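The compensatory schema can be sketched as an additive rule with a threshold. In this minimal Python illustration, ability and effort are each scored 0 to 10 and a student passes when their sum reaches 10; the scale, the threshold, and the function names are all hypothetical assumptions chosen to make the trade-off visible.

```python
PASS_THRESHOLD = 10  # hypothetical: combined ability + effort needed to pass


def passes(ability: int, effort: int) -> bool:
    """Compensatory causes: a high level of one factor can offset a low level of the other."""
    return ability + effort >= PASS_THRESHOLD


def minimum_other(known_level: int) -> int:
    """Lowest level of the unobserved factor that still fits an observed pass."""
    return max(0, PASS_THRESHOLD - known_level)


# Passed without working very hard (effort 2 on a 0-10 scale): ability must be at least 8.
print(minimum_other(2))
# Passed despite low aptitude scores (ability 3): effort must be at least 7.
print(minimum_other(3))
```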

The examples discussed so far involve dichotomous effects -- admitted or rejected, honors or not, pass or fail. But, of course, sometimes outcomes vary along a continuum -- like the A-F scheme for college grades. This situation calls for the graded effects schema. Suppose that you held the view that high ability and great effort are required to get an A in a course, but high levels of one or the other would be enough to get a C or a B; if both are relatively lacking, the student will flunk. If you observe a student get an A, then you can infer both that he is smart and that he tried hard; if he gets a B, despite being smart, you infer that he didn't try very hard. If he gets a D, maybe he was lacking in both areas.
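The graded-effects schema can be sketched the same way, with bands instead of a single threshold. In this minimal Python illustration, ability and effort each take one of three levels (0 = low, 1 = moderate, 2 = high) and their sum indexes a grade from F to A; this additive banding and the helper names are hypothetical, chosen to reproduce the pattern of inferences in the paragraph above.

```python
GRADES = ["F", "D", "C", "B", "A"]  # hypothetical: grade index = ability + effort
LEVELS = {0: "low", 1: "moderate", 2: "high"}


def grade(ability: int, effort: int) -> str:
    """Graded effects: the grade rises with the combined level of both causes."""
    return GRADES[ability + effort]


def infer_effort(observed_grade: str, ability: int):
    """Effort level implied by a grade and a known ability level, or None if impossible."""
    effort = GRADES.index(observed_grade) - ability
    return effort if effort in LEVELS else None


def possible_profiles(observed_grade: str):
    """Every (ability, effort) pair that would produce the observed grade."""
    return [(a, e) for a in LEVELS for e in LEVELS if grade(a, e) == observed_grade]


print(possible_profiles("A"))                # [(2, 2)]: smart and hard-working
print(LEVELS[infer_effort("B", ability=2)])  # moderate: smart, but did not try very hard
print(possible_profiles("D"))                # [(0, 1), (1, 0)]: short on both counts
```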

Counterfactual Reasoning in Causal Attribution

Barbara Spellman has noted that causal attribution in legal and medical cases always involves singular events, which means that judges (by which we mean jurors and physicians) can't apply the covariation calculus either. Because any individual crime is committed only once, and any episode of illness occurs only once, we can't even construct a 2x2 table crossing action/no action with outcome/no outcome. Instead, she has suggested that jurors and physicians apply a kind of counterfactual reasoning.

To take a medical example, consider reasoning about the cause of cancer in a patient:

She further suggests that, under these circumstances, people attribute responsibility by a principle of counterfactual potency:

Causal attribution sometimes involves something like a calculus, and sometimes involves something like a schema, but causal attribution can also be implicit in language.

Consider the following examples of anaphoric reference from Garvey and Caramazza (1974):

Here is another example of anaphoric reference, from recent work by Joshua Hartshorne:

Consider the following problem: Ted helps Paul. Why?

Note that the problem entails a single observation, so the covariation calculus cannot apply. As it happens, people overwhelmingly make the causal attribution to Ted rather than Paul. The same thing happens with a number of other verbs, such as cheats, attracts, and troubles.


On the basis of such observations, we might hypothesize that there is yet another schema for causal inference, based on the syntax of language -- to wit: Causal attribution is biased toward the grammatical subject of the sentence.

But this isn't always the case. If the problem is changed to Ted likes Paul, the attribution shifts from Ted, the subject of the sentence, to Paul, the object. This also happens with other verbs, such as detests and notices.


When Ted charms Paul, the attribution is to Ted; but when Ted loathes Paul, the attribution is to Paul. What makes the difference?


Brown and Fish noted that the pattern of causal attributions seemed to be related to the derivational morphology of verbs.

That's a mouthful, but the fact that it's a mouthful isn't its only problem. The real problem is that, in order to apply this syntactical schema, judges would have to consult a dictionary every time they made causal attributions!

Brown and Fish found the solution to this problem by shifting attention from the syntactic roles of subject and object in the grammar of a sentence to their semantic roles. As the UCB linguist Charles Fillmore noted, there are two pairs of such roles:

A series of formal experiments confirmed that people actually use these linguistic schemata in making causal attributions. In these experiments, Brown and Fish constructed simple sentences such as Ted likes Paul from behavioral action verbs and mental-state verbs that had been randomly sampled from the dictionary.


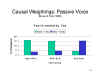

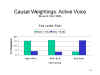

When the sentences were worded in the active voice, as in Ted likes Paul, the subjects' attributions generally followed the predictions.


This was also true when the sentences were worded in the passive voice, as in Paul is liked by Ted.


The same was true when the rating scale was replaced by a free listing of reasons, in which subjects could write about either Ted or Paul.


Finally, Brown and Fish employed a quite different procedure, in which subjects read sentences such as Ted likes Paul and then had to make ratings of distinctiveness and consensus:


Applying the Stimulus-Experiencer schema, subjects rated the scenario higher on consensus, which would yield attributions to the Target.

These linguistic schemata have important implications for the Fundamental Attribution Error, to be discussed below. We do not generally attribute causality to the actor. Instead, we attribute causality to the agent, or the stimulus, or the experiencer. In other words, the Fundamental Attribution Error is fundamentally misframed.

In any event, these linguistic schemata indicate that causal attributions are associated with the semantic structure of language.

The implication of this conclusion is that someone ought to re-do the McArthur study, with careful attention to the different semantic roles of Agent and Patient, and Stimulus and Experiencer, and determine the extent to which linguistic schemata alter the application of the covariation calculus. If you do this experiment, remember: You read it here first!

Brown and Fish considered, further, whether the linguistic schemata reflected a "Whorfian" result -- that is, whether the linguistic schemata implied that thought (in this case, causal attributions) was structured, controlled, or influenced by language (in this case, semantics). They argued that the linguistic schemata were not Whorfian in nature. The reason for this is that English derives adjectives from verbs by adding one or another of a relatively small set of suffixes, such as -ful and -some.


So the existence of linguistic schemata for causal attribution appears to be a contra-Whorfian result, in which thought -- something like the Fundamental Attribution Error, perhaps, but not exactly -- is structuring language.

Language and Social Interaction

These linguistic effects on social judgment remind us of the intimate relationship between language and social interaction. Viewed strictly from a cognitive point of view (as Noam Chomsky might, for example), language is a tool of thought -- a powerful means of representing and manipulating knowledge. But from a social point of view, language is a similarly powerful tool for communication -- for expressing one's thoughts, feelings, and desires. Without language, our social interactions would be much different. This raises the question of the effect of language on social interaction -- another aspect of the Whorfian hypothesis, I suppose. Do social interactions differ depending on the language in which they are conducted? Maybe. Sylvia Chen and Michael Bond (2010), two psychologists at Hong Kong Polytechnic University, conducted an interview study of personality in native Chinese students who were fluent in English. When the students were interviewed in English, as opposed to Cantonese, they appeared more extraverted, assertive, and open to new experiences. The investigators speculated that speaking a language primes the speaker to express the features of personality stereotypically associated with native speakers of that language.

Like Norman Anderson's cognitive algebra, Kelley's covariation calculus for causal attribution illustrates normative rationality in social judgment and decision-making.

According to the normative model of human judgment and decision-making:

One aspect of normative rationality is a reliance on algorithms for reasoning. Algorithms are cognitive "recipes" for combining information in the course of reasoning, judgment, choice, decision-making, and problem-solving. When appropriately applied, they are guaranteed to yield the correct answer to a problem. The covariation calculus is, in a sense, an algorithm for causal reasoning -- a recipe that will always yield the logically correct causal explanation.
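To make the idea of an algorithm concrete, the standard textbook summary of the covariation calculus can be written as a small decision procedure. This is only a simplified sketch, assuming that consensus, distinctiveness, and consistency are each coded simply as "high" or "low"; the function name and the "mixed" fallback for the remaining patterns are hypothetical, not part of Kelley's or McArthur's own formulation.

```python
from typing import Literal

Level = Literal["high", "low"]


def covariation_attribution(consensus: Level, distinctiveness: Level,
                            consistency: Level) -> str:
    """Map a pattern of covariation information to a causal locus (simplified sketch)."""
    if consistency == "low":
        # The behavior does not recur across occasions: locate the cause in the occasion.
        return "circumstances"
    if consensus == "high" and distinctiveness == "high":
        # Everyone responds this way, but only to this particular stimulus.
        return "stimulus"
    if consensus == "low" and distinctiveness == "low":
        # Only this person responds this way, and to many different stimuli.
        return "person"
    # Remaining patterns point to no single locus in this simplified scheme.
    return "mixed (no single locus)"


print(covariation_attribution("high", "high", "high"))
print(covariation_attribution("low", "low", "high"))
```

Given the same three pieces of information, the procedure always returns the same locus, which is what it means to call the calculus an algorithm rather than a heuristic.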

To some extent, it is clear that people do follow these normative rules -- which is why the covariation calculus has been confirmed experimentally. However, in other respects they appear to depart systematically from them, causing errors and biases to occur in social judgment.

Some of these errors and biases stem from the very nature of social judgment, which is that it frequently takes place under conditions of uncertainty:

But there are other instances where people depart from normative rationality even though there is enough information available to permit them to apply reasoning algorithms such as the covariation calculus, resulting in systematic biases in causal attribution.

Chief among these errors is what has come to be known as the Fundamental Attribution Error (FAE; Ross, 1977) -- the tendency for people to overestimate the role of dispositional factors, and underestimate the role of situational factors, in making causal attributions.

As with so many other topics in social psychology, the FAE was first noticed by Heider:

Changes in the environment are almost always caused by acts of persons in combination with other factors. The tendency exists to ascribe the changes entirely to persons (Heider, 1944, p. 361).

Here is Ross's statement of the effect, in his classic review of "The Intuitive Psychologist and His Shortcomings":

[T]he intuitive psychologist's shortcomings...start with his general tendency to overestimate the importance of personal or dispositional factors relative to environmental influences.... He too readily infers broad personal dispositions..., overlooking the impact of relevant environmental forces and constraints (Ross, 1977, p. 183).

And another statement, from a book written by Ross with Richard Nisbett, Human Inference: Strategies and Shortcomings of Social Judgment:

[T]he tendency to attribute behavior exclusively to the actor's dispositions and to ignore powerful situational determinants of the behavior. Nisbett & Ross (1980, p. 31)

Ross found evidence of the FAE in Jones and Harris' (1967) studies employing the attitude attribution paradigm.

Recall that the subjects in this experiment tended to attribute debaters' favorable statements about Castro (and, to a lesser degree, racial segregation) to their favorable attitudes toward these objects -- despite their knowledge that the debaters had no choice in the position they took. They ignored this situational information, and made a causal attribution to the debaters' attitudinal dispositions.

He also found it in McArthur's study of the covariation calculus.

For example, in a control condition, people were asked to make causal attributions in the absence of any information about consistency, distinctiveness, or consensus. Under these conditions, they probably should have made their attributions randomly (setting aside the linguistic schemata, which nobody knew about at the time). However, the judges made more attributions to the actor than to the situation.

McArthur also used ANOVA to estimate the proportion of variance in causal attributions that could be attributed to each kind of information. Overall, the judges relied more on consistency information, which drives attributions toward the Actor, than on consensus information, which would drive attributions toward the Situation.
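The ANOVA logic can be illustrated with eta-squared, the proportion of the total variance in the ratings that is accounted for by each factor. This sketch assumes a balanced 2 x 2 x 2 design with two ratings per cell; the function names are hypothetical, and the numbers are invented so that consistency dominates. They are not McArthur's data.

```python
from itertools import product


def make_hypothetical_data():
    """Invented ratings of attribution to the Actor in a balanced 2 x 2 x 2 design."""
    rows = []
    for consensus, distinctiveness, consistency in product(("low", "high"), repeat=3):
        # Additive model: consistency has the largest effect, consensus the smallest.
        cell_mean = (4.0
                     + 2.0 * (consistency == "high")
                     + 1.0 * (distinctiveness == "low")
                     + 0.5 * (consensus == "low"))
        for noise in (-0.5, 0.5):  # two replicate judges per cell
            rows.append({"consensus": consensus,
                         "distinctiveness": distinctiveness,
                         "consistency": consistency,
                         "rating": cell_mean + noise})
    return rows


def eta_squared(rows, factor):
    """Proportion of total variance explained by one factor's main effect."""
    ratings = [r["rating"] for r in rows]
    grand_mean = sum(ratings) / len(ratings)
    ss_total = sum((y - grand_mean) ** 2 for y in ratings)
    ss_factor = 0.0
    for level in {r[factor] for r in rows}:
        group = [r["rating"] for r in rows if r[factor] == level]
        ss_factor += len(group) * (sum(group) / len(group) - grand_mean) ** 2
    return ss_factor / ss_total


rows = make_hypothetical_data()
for factor in ("consistency", "distinctiveness", "consensus"):
    print(factor, round(eta_squared(rows, factor), 2))
```

With these made-up ratings, consistency accounts for 64% of the variance, distinctiveness for 16%, and consensus for only 4%; the remaining 16% is within-cell noise.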


Subsequent research has identified a number of sources of the FAE:

Now-classic studies uncovered a number of attributional errors, apparently reflecting systematic biases in the attributional process.

In the Actor-Observer Difference in Causal Attribution (Jones & Nisbett, 1972), also known as the Self-Other Difference, people tend to attribute other people's behavior to their dispositions, but their own behavior to the situation.

The person tends to attribute his own reactions to the object world, and those of another, when they differ from his own, to personal characteristics [of the other]. Heider (1958, p. 157)

[T]here is a pervasive tendency for actors to attribute their actions to situational requirements, whereas observers tend to attribute the same actions to stable personal dispositions. Jones & Nisbett (1972, p. 80)

The flavor of the Actor-Observer difference can be given in the following anecdote from Jones and Nisbett (1972, p. 79):

When a student who is doing poorly... discusses his problems with a[n] adviser, there is often a fundamental difference of opinion between the two. The student... is usually able to point to environmental obstacles such as a particularly onerous course load, to temporary emotional stress..., or to a transitory confusion about life goals.... The adviser... is convinced...instead that the failure is due to enduring qualities of the student -- to lack of ability, to irremediable laziness, to neurotic ineptitude.

Notice that the Actor-Observer Difference sets limits on the Fundamental Attribution Error. That is to say, we make the Fundamental Attribution Error when explaining the behavior of other people, but not when explaining our own behavior.

As with the Fundamental Attribution Error, social-cognition researchers have given a tremendous amount of attention to the Actor-Observer Difference. One explanation of the Actor-Observer Difference is that actors have more information about the causes of their own behavior than do observers. Presumably, if perceivers had as much information about the behavior of another person as they do about their own behavior, they wouldn't make the Fundamental Attribution Error in the first place.

Nevertheless, subsequent research (reviewed by Watson, 1982) indicated that the Actor-Observer difference is actually rather weak.

We will return to this issue later.

In the Self-Serving Bias in Causal Attribution (Hastorf, Schneider, & Polefka, 1970), also known as the Ego Bias, the Ego-Defensive Bias, the Ego-Protective Bias, or just plain Beneffectance (Greenwald, 1980), people tend to take responsibility for positive outcomes, but not for negative outcomes.

That reason is sought that is personally acceptable. It is usually a reason that flatters us, puts us in a good light, and it is imbued with an added potency by the attribution. Heider (1958, p. 172)

We are prone to alter our perception of causality so as to protect or enhance our self esteem. We attribute success to our own dispositions and failure to external forces. Hastorf, Schneider, & Polefka (1970, p. 73)

Again, the self-serving bias may be illustrated by an academic anecdote:

In asking students to judge an examination's quality as a measure of their ability to master course material, I have repeatedly found a strong correlation between obtained grade and belief that the exam was a proper measure. Students who do well are willing to accept credit for success; those who do poorly, however, are unwilling to accept responsibility for failure, instead seeing the exam (or the instructor) as being insensitive to their abilities (Greenwald, 1980, p. 604).

And maybe by this observation about the power of prayer (from a letter to the editor by Charles F. Eikel, New York Times Magazine, November 2004):

Claims of speaking with God or hearing from God... are made by the same people who, when things go bad, say, "It was God's will", and when they go well, "My prayers were answered".

Much as the Actor-Observer Difference sets limits on the Fundamental Attribution Error, the Self-Serving Bias sets limits on the Actor-Observer Difference. That is, we explain our failures by making external attributions, but we explain our successes by making internal attributions. Of course, those internal attributions presumably entail the Fundamental Attribution Error.

An early review (Miller & Ross, 1975) found the empirical evidence for the Self-Serving Bias to be mixed:

We shall have more to say about these claims of attributional bias later in the supplement.

But claims that causal attributions and other aspects of social judgment were riddled with errors and biases led to the development of a large industry within social cognition, documenting a large number of ostensible departures from normative rationality. This list was produced by Krueger and Funder in 2004. A quick Google search on "Cognitive Errors" will turn up dozens more errors ostensibly infecting decision-making, belief, probability judgments, social behavior, and memory.

What accounts for these errors and biases?

One view is that they reflect a set of conditions collectively known as judgment under uncertainty. Under these circumstances, when algorithms cannot be applied, people rely on judgment heuristics: shortcuts, or "rules of thumb", that bypass the logical rules of inference. Judgment heuristics permit judgments to be made under conditions of uncertainty, but they also increase the likelihood of making an error in judgment.

Analysis of errors in judgment by Daniel Kahneman (formerly of UC Berkeley), the late Amos Tversky (of Stanford University), and others has revealed a number of heuristic principles that people apply when making both social and nonsocial judgments. Kahneman shared the Nobel Prize in Economics for his work on judgment heuristics and other aspects of judgment and decision-making. Tversky did not share the prize because, by the time it was awarded, he had died of cancer, and Nobel prizes are not awarded posthumously.

Four of the best-known judgment heuristics are:


For an overview of judgment heuristics, see the General Psychology lecture supplements on Thinking.

These judgment heuristics can be employed in making causal attributions:

An interesting demonstration of availability in causal attributions is provided by an experiment by Taylor and Fiske (1975) employing a version of the "getting acquainted paradigm", in which a single real subject interacted with two other individuals who were in fact confederates of the experimenter. The situation was arranged so that the subject faced one confederate or the other, or was seated at right angles to the confederates. After the trio performed a group task, the subject was asked to assign responsibility for the outcome. Subjects tended to assign more responsibility to the confederate they faced -- the one who was most salient, and therefore most available.

Taylor and Fiske suggested that causal attributions were "top of the head" phenomena, and not the products of the systematic thought implied by the covariation calculus.

A Confirmatory Bias in Hypothesis-Testing?

A similar story can be told about another frequently cited error: the confirmatory bias in hypothesis-testing. In the context of causal attribution, you can think of "Something About the Person" as a causal hypothesis to be tested by collecting further evidence.

Inspired by a classic study of the "Triples Task" by Wason (1960), Snyder (1981a, 1981b) proposed that social judgment was characterized by an inappropriate (and irrational) confirmatory bias.

Testing Logical Hypotheses

In the Triples Task, subjects were presented with cards displaying three numbers, such as 2 - 4 - 6. They were told that the number string conformed to a simple rule, and that their task was to infer this rule. They were to test their hypotheses by generating new strings of three numbers, and would receive feedback as to whether their strings conformed to the rule. According to Wason and Johnson-Laird, a typical trial would go something like this:

| Subject | Experimenter |
|---|---|
| 8 - 10 - 12 | Yes, that conforms |
| 14 - 16 - 18 | Yes |
| 20 - 22 - 24 | Yes |
| Hypothesis: Add 2 to the preceding number | No, that is incorrect |
| 2 - 6 - 10 | Yes, that conforms |
| 1 - 50 - 99 | Yes |
| Hypothesis: The second number is the arithmetic mean of the 1st and 3rd numbers | No, that is incorrect |
| 3 - 10 - 17 | Yes, that conforms |
| 0 - 3 - 6 | Yes |
| Hypothesis: Add a constant to the preceding number | No, that is incorrect |
| 1 - 4 - 9 | Yes, that conforms |
| Hypothesis: Any three numbers, ranked in increasing order of magnitude | Yes, that is correct! |

Johnson-Laird's account of the modal subject in this experiment goes like this:

A logical approach would construct pairs of alternative hypotheses, so that the subject would offer a sequence that would prove one hypothesis and simultaneously disprove the other.
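The logic of pairing alternative hypotheses can be sketched in a few lines. A probe triple is informative only if the two hypotheses predict different answers. The function names below are hypothetical, and the search simply tries triples of small whole numbers in order until it finds one that separates the two hypotheses.

```python
from itertools import product
from typing import Callable, Iterable, Optional, Tuple

Triple = Tuple[int, int, int]
Rule = Callable[[Triple], bool]


def add_two(t: Triple) -> bool:
    a, b, c = t
    return b == a + 2 and c == b + 2


def increasing(t: Triple) -> bool:
    a, b, c = t
    return a < b < c


def discriminates(t: Triple, h1: Rule, h2: Rule) -> bool:
    """A probe is informative only if the two hypotheses predict different answers."""
    return h1(t) != h2(t)


def find_discriminating_probe(h1: Rule, h2: Rule,
                              values: Iterable[int] = range(0, 11)) -> Optional[Triple]:
    """Return the first triple (in lexicographic order) on which h1 and h2 disagree."""
    for t in product(values, repeat=3):
        if discriminates(t, h1, h2):
            return t
    return None


confirming = [(8, 10, 12), (14, 16, 18), (20, 22, 24)]
print(any(discriminates(t, add_two, increasing) for t in confirming))  # False
print(find_discriminating_probe(add_two, increasing))  # (0, 1, 2)
```

Every one of the modal subject's confirming triples fits both "add 2" and "increasing", so none of them can tell the two hypotheses apart, whereas a triple such as 2 - 6 - 10 or 1 - 4 - 9 can.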

Something very similar occurs in a variant on the "triples" task, now known as the "Card Task" -- employed by Wason and Johnson-Laird (1968) for the study of formal (logical) hypothesis-testing, in which categorical statements can be disconfirmed by a single counterexample. In the Card Task, subjects are shown four cards, each of which has a letter on one side and a number on the other. The faces shown to the subject might be

| A | M | 6 | 3 |

The subject is then given a rule, such as:

| P | Q |
|---|---|
| If there is a vowel on one side, | then there is an even number on the other side. |

The subject is then asked to select cards to turn over to determine whether the rule is correct.

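Wason and Johnson-Laird reported that the modal subject either selected the A card alone, or else the A card and the 6 card. However, this is logically incorrect. The rule can be falsified only by a vowel paired with an odd number, so the cards that must be turned over are A (P) and 3 (Not Q); whatever is on the back of the 6 card cannot disconfirm the rule.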
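The logic of the Card Task can be checked mechanically: a card is worth turning over only if its hidden side could reveal a vowel paired with an odd number. This minimal sketch assumes that each card shows either a single letter or a whole number; the function name is hypothetical.

```python
def could_falsify(visible: str) -> bool:
    """Could turning this card over reveal a violation of
    'if there is a vowel on one side, then there is an even number on the other'?"""
    if visible.isalpha():
        # A letter card matters only if it is a vowel (P): it might hide an odd number.
        return visible.upper() in "AEIOU"
    # A number card matters only if it is odd (Not Q): it might hide a vowel.
    return int(visible) % 2 == 1


cards = ["A", "M", "6", "3"]
print([card for card in cards if could_falsify(card)])  # ['A', '3']
```

The 6 card is irrelevant because the rule says nothing about what must be on the back of an even number; turning it over can only confirm, never refute.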

Philosophers of science such as Karl Popper and Imre Lakatos have made compelling arguments favoring a disconfirmatory strategy for scientific hypothesis-testing. That doesn't mean that scientists always do it, and in fact many of them (including myself) don't do it very often at all. But it does mean that, technically, the disconfirmatory strategy is, on strictly logical grounds, far preferable to a confirmatory (or verificationist) strategy.

In both cases, it seems that the subjects are employing a confirmatory strategy: confirming whether the sequence adds 2 to the previous number (or whatever), or confirming whether vowels have even numbers, and even numbers have vowels. And, in fact, the literature on human judgment indicates that the intuitive hypothesis-tester does seem to be prone to a confirmation bias.

Social Hypothesis-Testing

It's this kind of strategy that Snyder and his colleagues thought they observed in social cognition.

Consider an experiment by Snyder and Swann (1978), employing the "getting acquainted" paradigm. First, they presented subjects with a profile of the "prototypical" extravert or introvert. Then they gave subjects a pool of 26 questions, from which they were to select 12 to test the hypothesis that a target was extraverted or introverted. The questions were distributed as follows:

This bias was robust in face of a number of manipulations:

In this light, consider an experiment by Trope and Bassok (JPSP 1982).

Trope and Bassok then tested for three strategies.

Moreover, in a further experiment by Trope and Alon (JPers 1984), subjects were given the task of discovering whether a target was introverted or extraverted, but were free to generate their own questions, rather than being forced to select from a pool of questions supplied by the experimenter. The subjects' questions were then coded:

The result was that there was little evidence of biased questioning, suggesting that the Snyder studies may have forced subjects to do something that they wouldn't ordinarily do. In fact, 73% of the questions were coded as non-directional, with the remainder roughly balanced between consistent and non-consistent.

So, it turns out that the Snyder studies don't provide good evidence for a confirmatory strategy in hypothesis-testing, which motivates us to examine the whole claim a little more closely. It turns out that the confirmatory bias is not all it's cracked up to be: it's not really that all-pervasive, it's not really confirmatory in nature, and it's not really a bias.

Logical Hypothesis-testing Revisited

Consider a conceptual replication and extension of the "Card Task" by Johnson-Laird et al. (1972). In this study, subjects were given a logical hypothesis of the form if P then Q -- which, of course, is logically tested by selecting P and Not Q.

To be fair, it's clear that subjects don't always test hypotheses properly. They don't always handle abstract representations of problems the way they should, and they tend to ignore p(evidence | hypothesis is untrue). But in familiar domains -- when the structure of the task is made clear to them, when they are given a logical hypothesis to test against an alternative, and when they are given information about diagnosticity (or permitted to formulate their own questions) -- subjects aren't nearly as irrational, illogical, or stupid as some people like to think they are.
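The role of p(evidence | hypothesis is untrue) can be made concrete with Bayes' rule. In this sketch the hypothesis is "the target is an extravert" and the evidence is a "yes" answer to some interview question; the function names are hypothetical, and all of the probabilities are invented, chosen only to contrast a weakly diagnostic question with a strongly diagnostic one.

```python
def likelihood_ratio(p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Diagnosticity of evidence E: how much more likely E is if H is true than if it is false."""
    return p_e_given_h / p_e_given_not_h


def posterior(prior_h: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Bayes' rule for a binary hypothesis H after observing evidence E."""
    joint_h = prior_h * p_e_given_h
    joint_not_h = (1 - prior_h) * p_e_given_not_h
    return joint_h / (joint_h + joint_not_h)


# Hypothetical numbers: H = "the target is an extravert", E = a "yes" answer.
weak = posterior(0.5, 0.90, 0.80)    # a question nearly everyone answers "yes" to
strong = posterior(0.5, 0.60, 0.10)  # a question answered "yes" mostly by extraverts
print(round(weak, 3), round(strong, 3))  # 0.529 0.857
```

Most extraverts say "yes" to both questions, but only the second is rarely answered "yes" by introverts, so only the second moves the judgment very far from the 50-50 prior. Ignoring p(E | not H) amounts to treating the two questions as equally informative.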

The broader point is that people may behave normatively when they understand the task they have been given. When they don't understand the task, it seems hardly fair for us to criticize them for behaving inappropriately. The general principle here is that, when evaluating performance, we need to understand the experimental situation from the subject's point of view. Until we have such an analysis, we probably should hold off on drawing conclusions about bias and irrationality.

Is a Confirmatory Bias Always Bad?

Just to muddy the waters further, it's not clear that a confirmatory bias is always a bad way to test a hypothesis.

Consider one more analysis, by Klayman and Ha (1987), of rule discovery as hypothesis-testing. They considered a situation in which a person is shown a set of objects that differs from another set in some unspecified way, and must find a rule that exactly specifies the members of the target set. Effectively, the person tests a hypothesis concerning the rule by predicting whether a given object is in the target set. This is essentially what goes on in concept identification, and it's also what goes on in the "Triples Task".

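Anyway, K&H looked for two kinds of hypothesis-tests: a positive test strategy, which examines cases that are expected to fit the hypothesized rule, and a negative test strategy, which examines cases that are expected not to fit it.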

Consider the wide variety of relations between the hypothesized rule and the correct rule in hypothesis-testing situations:

Under conditions such as these, unambiguous falsification is impossible, and the positive test strategy is still preferable under many conditions.

This is especially true when you consider the costs associated with the positive and negative test strategies. Consider a real-world variant: If a student scores better than 1300 on the GRE, then s/he will be a good graduate student. If you really wanted to test this hypothesis using the prescribed negative test strategy, you would need to admit some students who scored below 1300, some of whom would turn out to be poor graduate students. But the cost of admitting poor students to graduate school is simply too great to bear.

The bottom line is similar to the lessons drawn concerning judgment heuristics. From a strictly prescriptive point of view, disconfirmation should be the goal of all hypothesis-testing. And, in formal terms, the best route to the goal of disconfirmation is a negative test strategy. But the negative test strategy may be impossible in probabilistic environments, where disconfirmation is not informative. And even when the negative test strategy is possible, it can be very expensive, and makes considerable demands on cognitive capacity.

For these reasons, Klayman and Ha called for a re-evaluation of "confirmation bias". They noted that a number of phenomena fall under the rubric of a positive test strategy: rule discovery, concept identification, general hypothesis-testing (like the Card Problem), intuitive personality testing, judgments of contingency, and trial-and-error learning. In each of these cases, the positive test strategy is a generally useful heuristic, even if it is normatively wrong.
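Klayman and Ha's point about the relation between the hypothesized rule and the correct rule can be illustrated by counting. This sketch treats the hypothesis H and the target rule T as sets of items, and computes the probability that a randomly chosen positive test (an item H says is in the target set) or negative test (an item H says is not) produces a disconfirmation. The function names and the two example rule pairs are hypothetical illustrations, not taken from their paper.

```python
from fractions import Fraction
from itertools import product


def falsification_rates(universe, in_h, in_t):
    """Chance that a random positive vs. negative test disconfirms hypothesis H."""
    universe = list(universe)  # iterate more than once, even if given a generator
    h = [x for x in universe if in_h(x)]
    not_h = [x for x in universe if not in_h(x)]
    # A positive test disconfirms H when the item turns out NOT to be in T.
    p_pos = Fraction(sum(1 for x in h if not in_t(x)), len(h))
    # A negative test disconfirms H when the item turns out to be in T after all.
    p_neg = Fraction(sum(1 for x in not_h if in_t(x)), len(not_h))
    return p_pos, p_neg


def add_two(t):
    a, b, c = t
    return b == a + 2 and c == b + 2


def increasing(t):
    a, b, c = t
    return a < b < c


# Case 1: H ("add 2") is embedded in T ("increasing"), as in the Triples Task.
triples = product(range(1, 11), repeat=3)
print(falsification_rates(triples, add_two, increasing))

# Case 2: T (multiples of 4) is embedded in H (even numbers).
print(falsification_rates(range(1, 101), lambda n: n % 2 == 0, lambda n: n % 4 == 0))
```

When H is embedded in T, positive tests can never disconfirm (the first rate is 0), while negative tests sometimes can (114 of 994 cases here). When T is embedded in H, the situation reverses: half of the positive tests disconfirm, and no negative test can. Neither strategy is best in every situation.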

So the "confirmatory bias in hypothesis testing" isn't really a confirmatory bias after all.

Judgment heuristics, and our biases in hypothesis testing, and the like seem to undermine the assumption, popular in classical philosophy and early cognitive psychology, that the decision-maker is logical and rational -- has an intuitive understanding of statistical principles bearing on sampling, correlation, and probability, follows the principles of formal logic to make inferences, and makes decisions according to the principles of rational choice.

In fact, psychological research shows that when people think, solve problems, make judgments, decisions, and choices, they depart in important ways from the prescriptions of normative rationality. These departures, in turn, challenge the view of humans as rational creatures -- or do they?

The basic functions of learning, perceiving, and remembering depend intimately on judgment, inference, reasoning, and problem solving. In this lecture, I focus on these aspects of thinking. How do we reason about objects and events in order to make judgments and decisions concerning them?

According to the normative model of human judgment and decision making, people follow the principles of logical inference when reasoning about events. Their judgments, decisions, and choices are based on a principle of rational self-interest. Rational self-interest is expressed in the principle of optimality, which means that people seek to maximize their gains and minimize their losses. It is also expressed in the principle of utility, which means that people seek to achieve their goals in as efficient a manner as possible. The normative model of human judgment and decision making is enshrined in traditional economic theory as the principle of rational choice. In psychology, rational choice theory is an idealized description of how judgments and decisions are made.

But in these lectures, we noted a number of departures from normative rationality, particularly with respect to attributions of causality.

These effects seem to undermine the popular assumption of classical philosophy, and early cognitive psychology, that humans are logical, rational decision makers -- who intuitively understand such statistical principles as sampling, correlation, and probability, and who intuitively follow normative rules of inference to make optimal decisions.

But do the kinds of effects documented here really support the conclusion that humans are irrational? Not necessarily. Normative rationality is an idealized description of human thought, a set of prescriptive rules about how people ought to make judgments and decisions under ideal circumstances. But circumstances are not always ideal. It may very well be that most of our judgments are made under conditions of uncertainty, and most of the problems we encounter are ill-defined. And even when they're not, all the information we need may not be available, or it may be too uneconomical to obtain it. Under these circumstances, heuristics are our best bet. They allow fairly adaptive judgments to be made. Yes, perhaps we should appreciate more how they can mislead us, and yes, perhaps we should try harder to apply algorithms when they are applicable, but in the final analysis:

It is rational to inject economies into decision making, so long as you are willing to pay the price of making a mistake.

Human beings are rational after all, it seems. The problem, as noted by Herbert Simon, is that human rationality is bounded. We have a limited capacity for processing information, which prevents us from attending to all the relevant information, and from performing complex calculations in our heads. We live with these limitations, but within these limitations we do the best we can with what we've got. Simon argues that we can improve human decision-making by taking account of these limits, and by understanding the liabilities attached to various judgment heuristics. But there's no escaping judgment heuristics because there's no escaping judgment under uncertainty, and there's no escaping the limitations on human cognitive capacity.

Simon's viewpoint is well expressed in his work on satisficing in organizational decision-making -- work that won him the Nobel Memorial Prize in Economics. Contrary to the precepts of normative rationality, Simon observed that neither people nor organizations necessarily insist on making optimal choices -- that is, always choosing the single option that maximizes gains and minimizes losses. Rather, Simon showed that organizations look for options whose outcomes are satisfactory (hence the name, satisficing), instead of exhaustively comparing every alternative to find the best one. The choice among these satisfactory outcomes may be arbitrary, or it may be based on non-economic considerations. But it rarely is the optimal choice, because the organization focuses on satisficing, not optimizing.

Satisficing often governs job assignments and personnel selection. If these decisions were governed by optimizing, the most highly qualified candidate would always get the job, and people would often be overqualified for their jobs, with skills far beyond what the job requires.
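The contrast between optimizing and satisficing in personnel selection can be sketched as two search procedures. This minimal sketch assumes that candidates are examined one at a time, and that satisficing means stopping at the first candidate who meets an aspiration level, which is a common way of formalizing the idea. The function names, candidate names, scores, and the aspiration level of 75 are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple, TypeVar

T = TypeVar("T")


def optimize(options: Sequence[T], value: Callable[[T], float]) -> Tuple[T, int]:
    """Evaluate every option and return the best one, plus how many were evaluated."""
    return max(options, key=value), len(options)


def satisfice(options: Sequence[T], value: Callable[[T], float],
              aspiration: float) -> Tuple[Optional[T], int]:
    """Examine options in order; stop at the first one that meets the aspiration level."""
    for examined, option in enumerate(options, start=1):
        if value(option) >= aspiration:
            return option, examined
    return None, len(options)  # nothing was good enough


def score(candidate: Tuple[str, int]) -> float:
    return candidate[1]


# Hypothetical job candidates, in the order they applied, with made-up scores.
candidates = [("Avery", 62), ("Blake", 78), ("Casey", 91), ("Drew", 85)]
print(optimize(candidates, score))       # (('Casey', 91), 4)
print(satisfice(candidates, score, 75))  # (('Blake', 78), 2)
```

The optimizer finds the strongest candidate but must evaluate everyone; the satisficer settles for an acceptable candidate after examining only two, trading a possibly better outcome for a cheaper search.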

Satisficing also seems to underlie affirmative action programs. In affirmative action, we create a pool of candidates, all of whom are qualified for a position. But assignment to the position might not go to the candidate with the absolutely "highest" qualifications. Instead, the final choice among qualified candidates might be dictated by other considerations, such as an organizational desire to increase ethnic diversity, or to achieve gender or racial balance. Affirmative action works so long as all the candidates in the pool are qualified for the job.

Another way of stating Simon's principles of bounded rationality and satisficing is with the idea of "fast and frugal heuristics" proposed by the German psychologist Gerd Gigerenzer.

The bottom line in the study of cognition is that humans are, first and foremost, cognitive beings, whose behavior is governed by percepts, memories, thoughts, and ideas (as well as by feelings, emotions, motives, and goals). Humans process information in order to understand themselves and the world around them. But human cognition is not tied to the information in the environment.

We go beyond the information given by the environment, making inferences in the course of perceiving and remembering, considering not only what's out there but also what might be out there, not only what happened in the past but what might have happened.

In other cases, we don't use all the information we have. Judgments of categorization and similarity are made not by a mechanistic count of overlapping features, but by paying attention to typicality. We reason about all sorts of things, but our reasoning is not tied to normative principles. We do the best we can under conditions of uncertainty.

In summary, we cannot understand human action without understanding human thought, and we can't understand human thought solely in terms of events in the current or past environment. In order to understand how people think, we have to understand how objects and events are represented in the mind. And we also have to understand that these mental representations are shaped by a variety of processes -- emotional and motivational as well as cognitive -- so that the representations in our minds do not necessarily conform to the objects and events that they represent.

Nevertheless, it is these representations that determine what we do, as we'll see more clearly when we take up the study of personality and social interaction.

The bottom line is that, precisely because judgment heuristics introduce economies into the judgment process, their use does not necessarily reflect a departure from normative rationality. So long as we are willing to pay the price of occasional error, the use of what Gigerenzer calls "fast and frugal heuristics" can be very rational indeed, because it enables us to make everyday judgments quickly, with little effort, and mostly correctly.
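One of the fast and frugal heuristics described by Gigerenzer and his colleagues is "take-the-best," used to judge which of two objects scores higher on some criterion (for example, which of two cities is larger). The sketch below is a simplified version under stated assumptions: cues are yes/no, every cue value is known, and the cues are already ranked by validity. The cue names and city data are hypothetical.

```python
import random
from typing import Dict, List

Cues = Dict[str, bool]


def take_the_best(a: Cues, b: Cues, cues_by_validity: List[str]) -> str:
    """Judge which object, 'a' or 'b', is higher on the criterion.

    Cues are checked one at a time, most valid first. The first cue on which
    the two objects differ decides the question, and every remaining cue is
    ignored (one-reason decision making). If no cue discriminates, guess.
    """
    for cue in cues_by_validity:
        if a[cue] != b[cue]:
            return "a" if a[cue] else "b"
    return random.choice(["a", "b"])


# Hypothetical question: which of two cities has the larger population?
cues = ["is_capital", "has_major_league_team", "has_university"]
city_x = {"is_capital": False, "has_major_league_team": True, "has_university": True}
city_y = {"is_capital": False, "has_major_league_team": False, "has_university": True}

print(take_the_best(city_x, city_y, cues))  # a  (decided by the second cue)
```

Unlike a weighted sum over all cues, the heuristic stops searching as soon as one cue discriminates, which is where its speed and frugality come from; it will sometimes be wrong when a lower-ranked cue points the other way.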

On the other hand, some psychologists have taken the position that judgment heuristics and biases are not "rational" shortcuts, but rather the tools of lazy reasoners. I have sometimes called these theorists the "People Are Stupid" School of Psychology, also PASSP, PeopleAreStupidism, or simply Stupidism (Kihlstrom, 2004) -- a school of psychology that occupies a place in the history of the field alongside the structuralist, functionalist, behaviorist, and Gestalt "schools".

To the extent that it is really a trend in social psychology -- and I'm not entirely sure that it is -- "People Are Stupid"-ism appears to have a number of sources:

All of this would be well and good, I suppose, if it were actually true that social (and nonsocial) cognition is riddled with error and bias; that automatic processes dominate conscious ones; and that free will is an illusion. But it's not necessarily the case.

In particular, the Actor-Observer Difference has recently been challenged by Malle (2006), who provided the first comprehensive review of this literature since Watson (1982). In contrast to Watson, who offered a narrative review of a selected subset of the literature available to him, Malle performed a quantitative meta-analysis of 173 published studies.

For each, he calculated a bias score representing the extent of the Actor-Observer difference:

The results were the same when Malle looked at a subset of studies involving positive and negative events. Here, there was evidence for a weak bias toward external attributions for negative events and internal attributions for positive events -- but the bias was very weak.

And, if you think about it, there wasn't much evidence for the fundamental attribution error. If there is little or no actor-observer difference in causal attribution, then people make attributions about others the same way they make attributions about themselves. And these attributions appear to consist of a nicely balanced mix of the internal and the external.

So, why all this attention to the Fundamental Attribution Error, the Actor-Observer Difference, and the like? Apparently, it takes a long time to correct the record. In another analysis, Malle plotted the magnitude of the Actor-Observer difference as a function of when the study was published.

In the final analysis, it may be that the classic literature on causal attribution, the literature that dominates most lectures and textbooks, is based on a misconception concerning causal relations in social interaction.

In the first place, consider what started it all: What E.E. Jones called "Lewin's grand truism",

B = f(P, E).

Heider took this scientific statement about the actual causes of behavior as a framework for thinking about the naive scientist's analysis of phenomenal or perceived causality. Just as personality psychologists traditionally attributed behavior to the person, and social psychologists to the environment, Heider assumed that the naive psychologist did pretty much the same thing.

But this argument assumes that P and E are independent of each other -- which was not Lewin's idea at all!

Lewin's view was that P and E were interdependent, and that together they constituted a single field, which he sometimes called the Life Space.

Moreover, according to the Doctrine of Interactionism, we now understand that P constructs E through his or her behavior (evocation, selection, and behavioral manipulation), and through his or her mental activity (cognitive transformation).

Therefore, considering "Lewin's grand truism" and the Doctrine of Interactionism, it appears that Heider -- and especially all the social psychologists who followed his lead -- made a false distinction between the person and the environment.

The temptation is to say that we should start the study of causal attribution all over again, from the beginning, abandoning the false distinction between the person and the environment and embracing the implications of the Doctrine of Mentalism and the Doctrine of Interactionism -- and the psychological argument that it's not the situation that causes behavior, but the perception of the situation.

Just such a re-start has been attempted by Bertram Malle (2005), in what he calls a folk-conceptual theory of causal attribution. In this work, Malle abandons the Heiderian model of the social perceiver as naive scientist, and seeks to understand how ordinary people actually reason about behavior. After all, that's what social cognition tries to understand: how people -- folk -- think about themselves and other people.

Malle argues that the folk-conceptualization of causality makes a distinction between two kinds of behaviors:

The reasons people give for voluntary actions typically form a combination of belief and desire. In the folk-conceptualization of causality, mental states of belief and desire are necessary precursors to intentional action.

The actor's mental state is often marked linguistically.

The distinction between marked and unmarked mental states is important, because when the actor's beliefs and desires are not explicitly marked, an internal cause (a reason) can be mistaken for an external one.
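A toy illustration of the marked/unmarked distinction: the two explanations below give the same reason, but only the first names the actor's mental state. The unmarked version reads like a fact about the situation, even though what explains the action is still the actor's belief that the store was open. The marker list and the `is_marked` function are hypothetical and deliberately crude; they are not a substitute for a real coding scheme applied by human judges.

```python
import re

# Hypothetical, deliberately tiny list of verbs that mark a belief or desire.
MENTAL_STATE_MARKERS = {
    "think", "thinks", "thought", "believe", "believes", "believed",
    "know", "knows", "knew", "want", "wants", "wanted",
    "hope", "hopes", "hoped", "wish", "wishes", "wished",
}


def is_marked(explanation: str) -> bool:
    """True if the explanation explicitly names the actor's belief or desire."""
    words = re.findall(r"[a-z']+", explanation.lower())
    return any(word in MENTAL_STATE_MARKERS for word in words)


marked = "She went to the store because she thought it was still open."
unmarked = "She went to the store because it was still open."

print(is_marked(marked))    # True
print(is_marked(unmarked))  # False
```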

While reasons apply only to intentional acts, causes can apply to any physical event, whether it is behavioral or not. Malle argues that causes can be arrayed on several dimensions corresponding to the dimensions familiar from the discussion above:

Whether the behavior is explained by a cause or a reason, the outcome is often controlled by the presence of factors that mediate between the cause and the effect:

Beliefs and desires, in turn, may be explained in terms of causes that make no assumption of subjectivity or rationality. These causal antecedents of reasons may come in many forms, for example:

Malle doesn't offer anything like Kelley's covariation calculus for causal attribution, but he does provide flowcharts that trace the logic of folk explanation.

Here's what the flowchart looks like as a whole:
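As a rough textual stand-in for that logic, here is a minimal sketch of how the explanation modes described in this section might be sorted. It is a simplification, not Malle's flowchart: it assumes each explanation can be summarized by three yes/no judgments, and the names `Mode`, `explanation_mode`, and its parameters are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    CAUSE = "cause"                               # unintentional behavior
    REASON = "reason"                             # the actor's beliefs and desires
    CAUSAL_HISTORY = "causal history of reasons"  # what led to those reasons
    ENABLING_FACTOR = "enabling factor"           # what made the outcome possible


def explanation_mode(intentional: bool,
                     explains_how_possible: bool,
                     cites_actors_reasons: bool) -> Mode:
    """Classify a single explanation of a behavior (simplified, hypothetical)."""
    if explains_how_possible:
        # Factors that mediate between cause and effect, e.g. skill or effort.
        return Mode.ENABLING_FACTOR
    if not intentional:
        # Reasons apply only to intentional acts; anything else gets a cause.
        return Mode.CAUSE
    if cites_actors_reasons:
        # The belief or desire in light of which the actor acted.
        return Mode.REASON
    # Antecedents of those reasons, which need not be subjective or rational.
    return Mode.CAUSAL_HISTORY


# "She tripped because the floor was wet."
print(explanation_mode(False, False, False))  # Mode.CAUSE
# "She went to the store because she wanted milk."
print(explanation_mode(True, False, True))    # Mode.REASON
# "She went to the store because she is a creature of habit."
print(explanation_mode(True, False, False))   # Mode.CAUSAL_HISTORY
# "She finished the marathon because she trained hard."
print(explanation_mode(True, True, False))    # Mode.ENABLING_FACTOR
```

The order of the checks matters: asking first whether the explanation is about how the outcome was possible reflects the point that enabling factors can apply whether the behavior is explained by a cause or by a reason.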

As persuasive as Malle's analysis of the folk conception of causation might be, it is an empirical question whether it alters our understanding of causal attribution.

Malle and his colleagues (2007) performed a number of studies in which they tested the implications of the folk-conception of causality against the traditional P-E framework.

Recall that the traditional framework simply holds that actors tend to attribute their behavior to external causes (situational factors), while observers tend to attribute actors' behavior to internal causes (like personality dispositions). By contrast, Malle's revisionist analysis makes an entirely different, and more subtle, set of predictions:

Across the nine studies, Malle et al. (2007) found little evidence for the traditional Actor-Observer asymmetries, but considerable evidence for the asymmetries predicted by the folk-conceptual theory.

Malle's work suggests that the folk-conceptual theory offers a powerful framework for understanding how real people actually explain social behavior in the real world.

The traditional P-E framework is inappropriate, for a number of reasons:

In this respect, it might be said that, because it ignores the Doctrine of Mentalism, the traditional P-E framework is a distinctly unpsychological psychological theory of causal attribution.

And it also ought to be said that, precisely because it invokes the Doctrine of Mentalism, the folk-conceptual theory is better psychology.

Note, too, that beliefs, feelings, and desires are internal states and dispositions of the actor.

Therefore, the Fundamental Attribution Error is not an error! But it is fundamental!

For an excellent overview of traditional attribution theory, see Causal Attribution: From Cognitive Processes to Collective Beliefs (1989) by Miles Hewstone, a British psychologist.

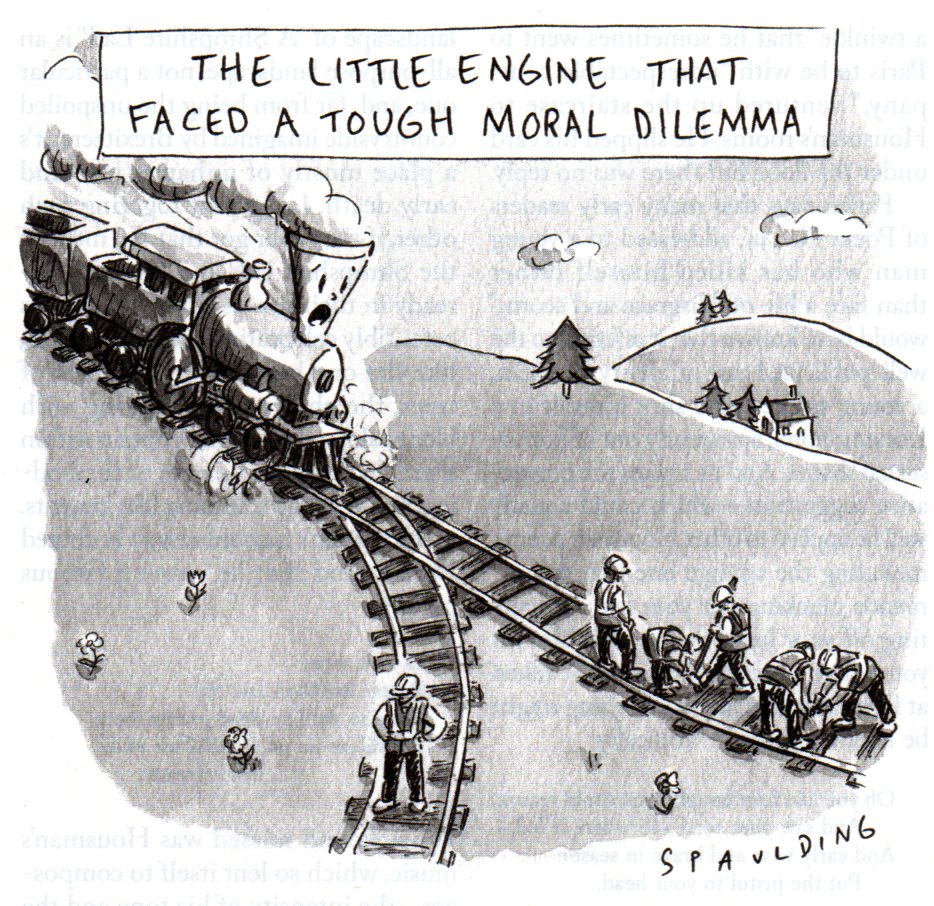

Moral Judgment

Social interaction involves at least three cognitive tasks.

If the causal attribution involves factors that are internal to the person, as opposed to external in the situation, the actor often faces a fourth task: